It’s the Platform, Not the Model

Why the GenAI race has moved above the model layer and what it means for your strategy

The Commodity Paradox

Something quietly remarkable happened in 2025: the most advanced AI models in the world started to look alike. Not identical, but close enough that picking between them began to feel less like a strategic technology decision and more like choosing a brand of coffee. The beans are roughly the same (I know, beans are never truly the same once you factor in roasting and so on, but let’s keep it simple); what you are really choosing is the cup, the barista, and the “café ecosystem” around it: how easy it is to get there, how nice the area is, the weather, and so on.

This is the commodity paradox of generative AI. After several wildly competitive years spent building ever-larger, ever-more-capable models, the frontier has converged. Benchmarks cluster. Prices have collapsed. And the companies building these models are now competing ferociously on something entirely different: the platforms, tools, protocols, and ecosystems that surround them.

“Models by themselves are not sufficient for a lasting competitive edge. OpenAI is not a model company; it’s a product company that happens to have fantastic models.” — Satya Nadella, Microsoft CEO, 2025

I want to make a simple but consequential argument: in the GenAI era, the model is the CPU. The platform is the computer. Nobody buys a CPU anymore; they buy a system. And the same logic now applies to artificial intelligence.

Models Are Converging

Benchmark Parity at the Frontier

ChatGPT, Claude, and Gemini trade positions across benchmarks, as do Kimi, Qwen, DeepSeek, and others. Each leads in some dimension; none dominates across all of them.

One 2025 LLM comparison study summarized the situation plainly: “With a few exceptions, Anthropic and OpenAI’s flagship models are essentially at parity, meaning your choice of any particular LLM should focus on specialized features rather than raw intelligence.”

So, benchmark scores are no longer a reliable strategic differentiator.

And open-source models have accelerated this convergence dramatically. DeepSeek-V3 matched frontier proprietary models at a reported training cost of under $6 million. Llama 4 and Qwen3 compete credibly at the top of public leaderboards. The existence of capable, free, self-hostable models has altered the power dynamic. No lab can maintain a lasting performance lead when its architecture and training recipes can be approximated by well-resourced competitors within months.

So why still use commercial models? Because with open-source models, you have to build and integrate your own ecosystem. Tools like Claude Code or Gemini’s all-in-one offering are simply too easy to use and too effective at solving your problem to pass up.

Others See It This Way, Too

The commodity thesis is no longer a fringe contrarian view.

In December 2025, during Microsoft’s AI Tour in Mumbai, Satya Nadella said: “There are lots of capable models available today. The question is how you bring those capabilities together.” He highlighted the concept of ‘context engineering’ as the real competitive edge. The performance of an AI application, he argued, is “directly correlated to how well your data is brought into context.” What he meant is the ability to ground AI systems in enterprise data, workflows, and relationships.

Thomas Wolf of Hugging Face has articulated the same transition differently: the focus is shifting from models to the systems that leverage them. He draws an analogy to the early internet: in the beginning, building a website was itself the business; today, companies build internet-native businesses by integrating web capabilities into broader systems. The underlying technology became nearly free. The value moved to the application layer and to how well it works together with other systems.

I attended Bits&Pretzels 2025 in Munich, where Mistral AI’s CRO, Marjorie Janiewicz, explained in an AMA how the platform and the services, in addition to the model, provide the most value to customers. Rather than every customer having to build and invent the whole system themselves, it makes sense for the vendor to offer the entire tool stack, batteries included.

Building the Platform Is Now the Strategy

If the model is no longer the moat, what is? The answer is playing out in real time across every major AI lab: they are racing to build platforms and ecosystems of tooling, integrations, agent infrastructure, and developer experiences that lock in users at a layer above the model itself.

OpenAI: From Model API to Agentic Operating System

OpenAI’s headline for the year was not a model milestone. It was: “2025 wasn’t about a single model launch. It was the year AI got easier to run in production.”

The platform buildout was comprehensive. The Responses API, launched in March 2025, gave developers built-in tools for web search, file search, and computer use, all accessible through a single API call. The Agents SDK (available in Python and TypeScript) provided building blocks for multi-agent orchestration, handoffs, guardrails, and tracing, and was deliberately made provider-agnostic, able to run non-OpenAI models. AgentKit added higher-level capabilities: an Agent Builder, ChatKit, a Connector Registry, and evaluation loops for teams wanting to ship faster.

The Codex CLI brought agent-style coding into local environments, so developers could run AI directly over real repositories, apply changes iteratively, and keep humans in the loop for long-horizon tasks. By mid-2025, reasoning capabilities were no longer a separate product family but folded into the mainstream GPT-5 line. The strategic message was clear: the model is a component; the platform is the product.

To underline this agentic-operating-system thinking in bold, OpenAI acquired OpenClaw/MoltBot (whatever it is called now) and brought its inventor on board. OpenClaw is an autonomous agent that executes tasks via large language models, using messaging platforms as its main user interface.

Anthropic: Protocol as Platform

Anthropic’s most consequential strategic move in 2024–2025 had nothing to do with model capability. It was the release, in November 2024, of the Model Context Protocol (MCP), an open standard for connecting AI agents to any data source, tool, or application.

Anthropic’s Chief Product Officer Mike Krieger called MCP the “USB-C interface of the AI field”: a single standard connector that eliminates the need for custom integrations between AI systems and the countless databases, APIs, and services they need to interact with. The protocol spread with extraordinary speed. Within one year of release: over 97 million monthly SDK downloads, more than 10,000 active MCP servers deployed, and first-class support integrated into Claude, ChatGPT, Gemini, Microsoft Copilot, Visual Studio Code, Cursor, and dozens more.

“The main goal is to have enough adoption in the world that it’s the de facto standard. We’re all better off if we have an open integration center where you can build something once as a developer and use it across any client.” — David Soria Parra, MCP co-creator, Anthropic
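To make the “build something once and use it across any client” idea more concrete, here is a minimal sketch of the kind of message an MCP client sends when it asks a server to run a tool. MCP messages follow JSON-RPC 2.0, and `tools/call` is the method used to invoke a tool. Everything else is an assumption for illustration: the helper `build_tool_call`, the tool name `search_tickets`, and its arguments are hypothetical and not part of any SDK.

```python
import json
from itertools import count

# Simple incrementing request ids; JSON-RPC only requires them to be unique per session.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request asking an MCP server to invoke one tool.

    Hypothetical helper for illustration; real clients would normally use an MCP SDK.
    """
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# "search_tickets" is a made-up tool exposed by a made-up server.
request = build_tool_call("search_tickets", {"query": "refund", "limit": 5})
print(json.dumps(request, indent=2))
```

The point of the sketch is that the request shape does not depend on which model or client sits on the other side, which is what lets one server serve many clients.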

In December 2025, Anthropic donated MCP to the Agentic AI Foundation (part of the Linux Foundation, the same institution that stewards Kubernetes, PyTorch, and Node.js).

This was not only an act of charity. It was a declaration that MCP is infrastructure: the neutral plumbing layer on which the entire agentic AI ecosystem should be built, owned by no single vendor.

Google: Agent-Ready & Hardware Independent by Design

Google’s platform strategy is grounded in its unique position: it controls more entry points to end users (Search, Gmail, Android, Chrome, Workspace) than any other technology company on earth. The AI strategy follows naturally: make all of Google’s services accessible to AI agents, and make Google’s AI ecosystem the place developers build.

In December 2025, Google launched fully managed, remote MCP servers for Google and Google Cloud services such as Maps and BigQuery. Steren Giannini, Product Management Director at Google Cloud, framed the strategy in plain terms: “We are making Google agent-ready by design.” The servers are protected by Google Cloud IAM and Model Armor, with full audit logging, recognizing that enterprise adoption requires governance as much as capability.

In my opinion, it is also worth mentioning that Google is, compared with the others, unusually robust against the AI hype. With its own hardware designed specifically for AI workloads (TPUs) and its own excellent, pioneering AI lab in DeepMind, it does not need to take part in the huge money-shuffling games.

Finally, Google’s NotebookLM is perhaps the clearest product-first example: built around Gemini from the beginning, with a 1-million-token context window, audio and video overviews, grounded document search, and tools that make the AI output inherently more useful than raw model output. It illustrates the ‘LLM as long-context research engine’ pattern that will define the next generation of AI products.

Mistral AI: Sovereignty as a Platform Differentiator

Mistral AI, the Paris-based startup founded in 2023 by former Meta and Google DeepMind researchers, offers perhaps the most instructive case study in the platform-over-model thesis. It entered the race without the deep pockets of its American rivals, and has been forced to compete on platform strategy from day one.

Mistral’s CEO Arthur Mensch says the company’s goal is to enable enterprises and governments to “customise the technology and make it their own so they can control it without external dependencies.”

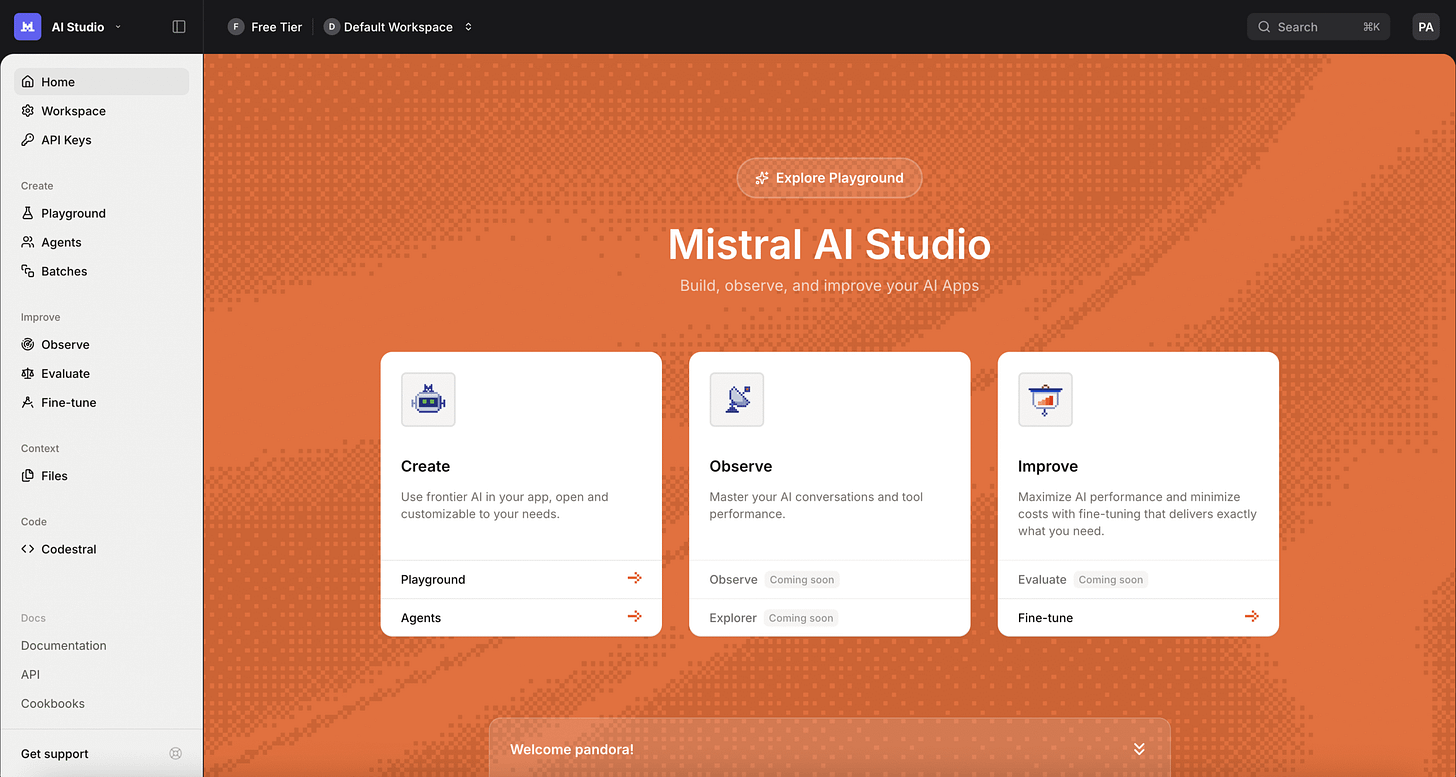

In October 2025, Mistral launched Mistral AI Studio — replacing its earlier La Plateforme offering with a production-grade environment that directly addresses the gap between AI experimentation and enterprise deployment. Studio integrates four layers in a single platform: a Runtime for executing models and agents; an Observability layer for monitoring and auditing outputs; a Registry for governing model versions and tracing outputs back to source components; and a Workflow Builder (in beta as of December 2025) for building multi-step, automatable AI processes without custom scripting. MCP support was announced as an upcoming integration, connecting Studio to enterprise systems and custom tools.

“The real bottleneck is software. What’s missing are the tools required to supervise, verify, and secure the agent’s actions. We have issues of observability, compliance, and control.”

— Timothée Lacroix, CTO & Co-founder, Mistral AI

The strategic logic Mistral embodies is the clearest expression of the platform thesis from a challenger perspective: when you cannot out-spend OpenAI or Google on model training, you compete on the layers they find harder to replicate:

deployment sovereignty

European regulatory alignment

on-premises control

applied integration expertise

Mistral is not selling a model. It is selling the assurance that AI can be deployed on your terms, in your infrastructure, under your governance.

Where Models Still Differ: User Taste

To be clear: models are not entirely identical. Meaningful differences remain in style, personality, and areas of specialization. But these differences have become matters of preference rather than capability gaps.

Claude is consistently described as having “the most soul” in writing, producing vivid character development, immersive world-building, and narratives with emotional depth. ChatGPT’s GPT-4o is praised for its comprehensive toolkit, persistent memory across conversations, and superior instruction-following for creative and visual tasks. Gemini 3 Flash is favored for speed and cost efficiency, particularly in multimodal workflows deeply integrated with Google services. Grok from xAI wins on access to real-time information. DeepSeek wins on price-to-performance for cost-sensitive deployments.

These are not trivial distinctions. They are analogous to choosing between television brands. The underlying panel technology is essentially the same. The brand experience, ecosystem integration, and software capabilities determine the choice. The competition has moved from the chip to the system.

What This Means for Enterprises and Developers

The platform-over-model reality has concrete strategic implications that many organizations have yet to fully internalize.

Evaluate Platforms, Not Just Models

When selecting an AI vendor or infrastructure, benchmark scores should not be the primary criterion. Instead, evaluate:

the richness of the platform’s tooling and integrations

the maturity of its agentic capabilities

support for local and on-premises deployment

fine-tuning and customization options

governance, compliance, and audit capabilities

the openness and interoperability of its ecosystem
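One way to turn the criteria above into a repeatable comparison is a simple weighted scorecard. The sketch below is illustrative only: the weights, the 0 to 5 rating scale, the criterion keys, and the two candidate platforms are all hypothetical and should be replaced with your own priorities and assessments.

```python
# Hypothetical weights per criterion (they sum to 1.0); tune them to your priorities.
WEIGHTS = {
    "tooling_integrations": 0.25,
    "agentic_maturity": 0.20,
    "local_deployment": 0.15,
    "customization": 0.10,
    "governance": 0.20,
    "interoperability": 0.10,
}


def platform_score(ratings: dict[str, float]) -> float:
    """Weighted sum of 0-5 ratings; raises if any criterion has not been rated."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


# Made-up ratings for two made-up platforms.
candidates = {
    "platform_a": {
        "tooling_integrations": 5, "agentic_maturity": 4, "local_deployment": 2,
        "customization": 3, "governance": 3, "interoperability": 3,
    },
    "platform_b": {
        "tooling_integrations": 3, "agentic_maturity": 3, "local_deployment": 5,
        "customization": 4, "governance": 5, "interoperability": 4,
    },
}

# Rank candidates from highest to lowest weighted score.
ranking = sorted(candidates, key=lambda name: platform_score(candidates[name]), reverse=True)
for name in ranking:
    print(f"{name}: {platform_score(candidates[name]):.2f}")
```

Note that benchmark scores do not appear among the weights at all. If you want them in, add them as one criterion among several rather than as the headline number.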

Finally, Your Data Is the Moat

The single most important message from industry leaders is consistent: competitive advantage in the AI era comes from how effectively enterprise data and context are brought into AI systems. The model is a commodity; the grounding of that model in proprietary knowledge, workflows, and relationships is not.

This means the strategic priority should be building robust data infrastructure with clean, accessible, well-governed enterprise data, rather than chasing the latest model release. The team that can most effectively bring its institutional knowledge into AI context will outperform the team with the nominally better model.
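What “bringing institutional knowledge into AI context” means in practice can be sketched in a few lines. The example below is a toy under stated assumptions: it selects documents by plain word overlap, whereas production systems typically use embeddings, access controls, and a proper retrieval layer. The function names, the prompt wording, and the sample documents are all hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_context(question: str, documents: dict[str, str], top_k: int = 2) -> list[str]:
    """Return ids of up to top_k documents sharing the most words with the question."""
    query_terms = tokenize(question)
    overlap = {doc_id: len(query_terms & tokenize(text)) for doc_id, text in documents.items()}
    ranked = sorted(overlap, key=lambda doc_id: overlap[doc_id], reverse=True)
    return [doc_id for doc_id in ranked[:top_k] if overlap[doc_id] > 0]


def build_prompt(question: str, documents: dict[str, str], top_k: int = 2) -> str:
    """Assemble a prompt that grounds the model in the selected documents."""
    chosen = select_context(question, documents, top_k)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in chosen)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"


# Made-up enterprise snippets standing in for a governed knowledge base.
documents = {
    "refund_policy": "Refunds are issued within 14 days of purchase for annual plans.",
    "sla": "Enterprise support responds to priority incidents within one hour.",
    "onboarding": "New customers receive a kickoff call and a shared workspace.",
}

prompt = build_prompt("How many days do customers have to request refunds?", documents, top_k=1)
print(prompt)
```

The model that receives this prompt is interchangeable. The documents, and the quality of the step that selects them, are not.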

The PC Moment for AI

History has a pattern for this. The transistor commoditized. The CPU commoditized. The operating system commoditized. The cloud server commoditized. In each case, the initial scarce resource became abundant, value migrated up the stack, and the winners were those who built compelling systems on top of the now-cheap substrate.

We are already in the PC moment of artificial intelligence, at least for this round of the AI hype. The raw intelligence of frontier models is becoming abundant and interchangeable. The race is now for the platform: the agentic infrastructure, the integration ecosystem, the developer experience, and the enterprise data layer that turns a capable model into an indispensable system.

The question isn’t ‘which LLM is best?’ but rather ‘which combination of tools best serves your specific workflow?’